The AQAvit project was created to “make quality certain to happen.” AQAvit verification is achieved by running and passing a prescribed, versioned set of tests in the AQAvit test suite. The Eclipse Foundation Quality Verification Software License (QVSL) requires those who wish to verify their product to share a summary of the test results by which verification was achieved. This document describes how to run the AQAvit verification tests, check that verification has passed, and collect the required test results for publication.

AQAvit verification is one of the criteria for listing in the Adoptium Marketplace. Leveraging the AQAvit test suite to ensure the quality of the binaries listed in the Adoptium Marketplace not only communicates to consumers how serious we are about quality, but also consolidates the good verification practices of the Adoptium Working Group members under a centralized effort. AQAvit aligns its test suite standards with the ever-changing requirements of the user base.

Overview

The AQAvit test suite is a large set of tests, many contributed to the AQAvit project and some pulled from a variety of open-source projects, that serve to verify the quality of OpenJDK binaries. The suite is suitable for testing Java SE 8 or higher on all supported platforms. To verify binaries, testers clone the latest stable release of the aqa-tests GitHub repository, configure their test environment, execute and pass the required test targets against each binary they plan to verify, and make the results of those test runs available as per the QVSL.

AQAvit Test Targets to Run

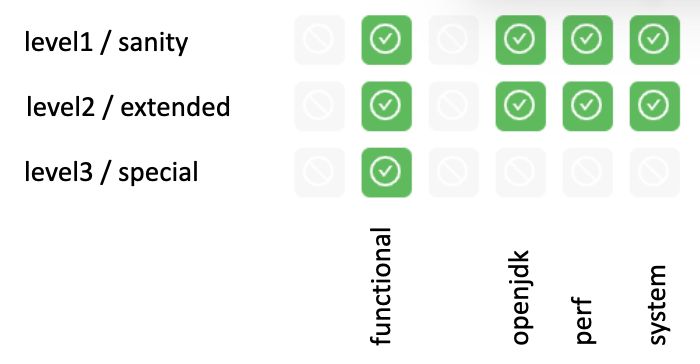

The tests are divided into different groups and those groups are split into 3 levels. This logical categorization of the tests provides flexibility and granularity and can be visually represented in a grid.

For the current release of AQAvit, the required set of top-level test targets is [sanity.functional, extended.functional, special.functional, sanity.openjdk, extended.openjdk, sanity.system, extended.system, sanity.perf, extended.perf]. In subsequent AQAvit releases, targets will be added to raise the quality bar even higher.
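The list of required targets can be generated rather than typed by hand. The following is a minimal sketch, not part of the AQAvit tooling: the function names `aqavit_required_targets` and `aqavit_plan` are hypothetical, and it assumes that `BUILD_LIST` is the part of the target name after the dot and that make targets carry a leading underscore, as in the command-line and workflow examples in this document. It only prints the invocations; it does not run any tests.

```shell
#!/usr/bin/env bash
# Print the nine required top-level targets, one per line.
aqavit_required_targets() {
  local level suite
  for suite in functional openjdk system perf; do
    for level in sanity extended; do
      printf '%s.%s\n' "$level" "$suite"
    done
  done
  # The special level is only required for the functional suite.
  printf '%s\n' "special.functional"
}

# Dry run: print the BUILD_LIST value and make target for each required target.
# Assumption: BUILD_LIST is the suite name (the text after the dot).
aqavit_plan() {
  local target
  aqavit_required_targets | while IFS= read -r target; do
    printf 'BUILD_LIST=%s make _%s\n' "${target#*.}" "$target"
  done
}

aqavit_plan
```

Printing the plan first makes it easy to confirm that all nine targets are covered before committing machine time to the runs.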

Details Regarding Test Execution

AQAvit can be run in various CI/CD environments as well as directly from the command line in a container or on a test machine that has the test prerequisites installed. The basic steps are the same in any environment:

    git clone --depth 1 --branch v0.9.6-release https://github.com/adoptium/aqa-tests.git
    export TEST_JDK_HOME=
    export USE_TESTENV_PROPERTIES=true
    export JDK_VERSION=17
    export JDK_IMPL=hotspot
    export BUILD_LIST=functional
    cd aqa-tests
    ./get.sh
    ./compile.sh
    cd TKG
    make _sanity.functional
    …
    make _extended.system

After the test runs, collect the *.tap files and metadata files, archive them in a .zip file, and publish the .zip file.

The AQAvit project created a GitHub action that allows the AQAvit test suite to be run from workflow files. The run-aqa action in the run-aqa repository allows users to pass in custom OpenJDK binaries for verification. Here is an example workflow file that runs the required sanity, extended and special targets on an ubuntu-latest GitHub runner:

    name: Run AQAvit

    on:
      workflow_dispatch: # Allows the job to be manually triggered

    env: # Links to the JDK build under test and the native test libs
      USE_TESTENV_PROPERTIES: true
      BINARY_SDK: https://github.com/adoptium/temurin11-binaries/releases/download/jdk-11.0.14.1%2B1/OpenJDK11U-jdk_x64_linux_hotspot_11.0.14.1_1.tar.gz
      NATIVE_LIBS: https://ci.adoptium.net/job/build-scripts/job/jobs/job/jdk11u/job/jdk11u-linux-x64-hotspot/lastSuccessfulBuild/artifact/workspace/target/OpenJDK11U-testimage_x64_linux_hotspot_2022-02-12-17-06.tar.gz

    jobs:
      run_aqa:
        runs-on: ubuntu-latest
        strategy:
          fail-fast: false
          matrix:
            target: [sanity, extended]
            suite: [functional, openjdk, system, perf]
            include:
              - target: special
                suite: functional
        steps:
          - name: Run AQA Tests - ${{ matrix.target }}.${{ matrix.suite }}
            uses: adoptium/run-aqa@v2
            with:
              version: '11'
              jdksource: 'customized'
              customizedSdkUrl: ${{ env.BINARY_SDK }} ${{ env.NATIVE_LIBS }}
              aqa-testsRepo: 'adoptium/aqa-tests:v0.9.6-release' # Make sure this branch is set to the latest release branch
              build_list: ${{ matrix.suite }}
              target: _${{ matrix.target }}.${{ matrix.suite }}
          - uses: actions/upload-artifact@v2
            if: always() # Always run this step (even if the tests failed)
            with:
              name: test_output
              path: ./**/output_*/*.tap

If you are using the AQAvit Jenkins test pipeline code available from the aqa-tests repository and described in the documentation under the Jenkins Setup and Running section, then these are additional parameters that you will set in order to run the required test targets.

    # Set Jenkins job parameters
    ADOPTOPENJDK_REPO=https://github.com/adoptium/aqa-tests.git
    ADOPTOPENJDK_BRANCH=v0.9.6-release
    USE_TESTENV_PROPERTIES=true

    # Execute test targets
    TARGET=sanity.functional and subsequently [extended.functional|special.functional|sanity.openjdk|extended.openjdk|sanity.system|extended.system|sanity.perf|extended.perf]

    # Collect and publish results
    Collect *.tap file and metadata file
    Archive .zip
    Publish .zip

The .tap files and metadata files contain timestamps and information about the binary under test. This information is collected from java -version output, release file information and querying some system properties during the test run. Where applicable, the information should match with the binary listed in the marketplace.
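The collect and archive steps can be scripted. The sketch below is illustrative only: `collect_tap_files` and `archive_results` are hypothetical helper names, the `output_*` directory pattern is taken from the workflow example in this document, and the `zip` command-line tool is assumed to be installed. The metadata files are not handled here because their file name is not given in this document; add them to the staging directory by hand before archiving.

```shell
#!/usr/bin/env bash
# Copy every .tap file found under an output_* directory into a staging directory.
# Usage: collect_tap_files <workspace> <staging_dir>
collect_tap_files() {
  local workspace="$1" staging="$2"
  mkdir -p "$staging"
  # The -path pattern mirrors the ./**/output_*/*.tap glob used in the workflow example.
  find "$workspace" -type f -path '*/output_*/*.tap' -exec cp {} "$staging"/ \;
}

# Archive the staging directory into a .zip file, if the zip tool is available.
# Usage: archive_results <staging_dir> <zip_file>
archive_results() {
  local staging="$1" archive="$2"
  if command -v zip >/dev/null 2>&1; then
    zip -q -r "$archive" "$staging"
  else
    echo "zip not found; archive $staging manually" >&2
    return 1
  fi
}
```

A typical call, run from the directory that contains the aqa-tests checkout, would be `collect_tap_files aqa-tests results && archive_results results results.zip`.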

Verifying Results

The AQAvit test suite produces test result files and metadata files at the end of the test execution. Upon running and passing each of the nine required test targets, the result files and metadata files are to be gathered and shared. For test targets that contain failures, the root cause of each failure should be addressed, after which the target can be rerun and an updated test result file produced and shared.

Test result files follow a naming convention and use the simple TAP (Test Anything Protocol) format. When top-level targets are run serially, a single .tap file is produced. For example, the file name

    Test_openjdk11_hs_sanity.system_aarch64_linux.tap

contains version, impl/distribution, test target and platform information, and the file contents look like:

    # Timestamp: Wed Mar 2 10:51:55 2022 UTC
    1..168
    ok 1 - MachineInfo_0
      ---
        duration_ms: 581
      ...
    ok 2 - ClassLoadingTest_5m_0
      ---
        duration_ms: 304339
      ...
    ok 3 - ClassLoadingTest_5m_1
      ---
        duration_ms: 303883
      ...
    etc.
    ...
    ok 168 - MauveMultiThrdLoad_5m_1
      ---
        duration_ms: 304296
      ...

One can see in this example that the top-level target sanity.system contains 168 sub-targets. Of the set of expected subtargets, some may be 'skipped' due to being filtered out as not applicable for a particular version or platform, but there must be none that failed. Within the tap file, they will show as 'not ok' if they have failed. Failing subtargets can be rerun individually and the tap file produced for that individual run can be included in the <results>.zip file to indicate that the binary under test was able to pass all expected targets.
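Checking a .tap file for failures can be automated. The following is a rough sketch, not an AQAvit tool: `tap_summary` is a hypothetical helper that counts the lines beginning with `ok` and `not ok` and reads the planned count from the `1..N` line. It assumes skipped sub-targets are marked with the standard TAP `# skip` directive on an `ok` line; check this against your own result files.

```shell
#!/usr/bin/env bash
# Summarize a TAP result file: planned count, passed, failed and skipped sub-targets.
# Prints one summary line and returns non-zero if any sub-target is "not ok".
# Usage: tap_summary <file.tap>
tap_summary() {
  local file="$1"
  awk '
    # Plan line, for example "1..168": keep the number after the dots.
    /^1\.\.[0-9]+/ { sub(/^1\.\./, ""); planned = $1 + 0 }
    # Failed sub-targets.
    /^not ok /     { failed++; next }
    # Assumption: skipped sub-targets are "ok" lines carrying a "# skip" directive.
    /^ok / && /# *[Ss][Kk][Ii][Pp]/ { skipped++; next }
    # Everything else that starts with "ok" passed.
    /^ok /         { passed++ }
    END {
      printf "planned=%d passed=%d failed=%d skipped=%d\n", planned, passed, failed, skipped
      exit (failed > 0)
    }
  ' "$file"
}
```

For example, `tap_summary Test_openjdk11_hs_sanity.system_aarch64_linux.tap` prints the four counts and exits with a non-zero status when at least one sub-target failed, which makes it usable as a gate before archiving results.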